Month: November 2025

Blog

High-Performance Servers for Crypto Exchanges and Trading Platforms

Milliseconds and credibility determine the life or death of cryptocurrency exchanges. Matching engines need to respond to events almost in real time, APIs must broadcast massive quantities of market data, and the system must withstand demand peaks while staying online. It is not just a best‑effort web workload; it is an ever‑running financial system. Here, the most reliable way to achieve low‑latency execution, sustained high throughput, and high uptime is to use high‑performance servers, which also provide the operational control required to meet emerging security and compliance demands.

Market size is why the bar keeps rising. CoinGecko’s annual report shows that global crypto trading volume in 2023 was $36.6 trillion, a strong reminder that demand is not only huge but constant. Performance expectations now resemble those of traditional finance. Industry guidance for digital-asset venues focuses on microseconds-to-low-milliseconds tick-to-trade tolerances (from price tick to order fill), and fairness demands tight dispersion around those figures.

Best Servers for Crypto Exchanges: 1,300+ ready-to-go servers, 21 global Tier IV & III data centers, 55+ PoP CDN across 6 continents

What Does Low‑Latency Really Mean for Crypto Trading?

Low latency isn’t a single number; it’s end‑to‑end from the user edge to the matching engine and back, with the distribution (p50/p95/p99) mattering as much as the mean. Two imperatives stand out:

Geographical distance and clean routing. Geography and path quality dominate round-trip times. Placing exchange entry points at the edge, and caching non-critical assets there, can cut tens of milliseconds for distant users. As the plot below illustrates, spreading entry points and content reduces both the slope and the variance of RTT with distance.

Illustrative latency vs. distance for exchange users (non‑measured model).

Compute and network stacks with low jitter. Each microburst of garbage collection, each context switch from a noisy neighbor, and each network‑path buffer burst adds dispersion that traders perceive as slippage. Industry guidance for digital‑asset platforms addresses this fair‑access constraint: HFT paths run in microseconds, broader flows in single‑digit milliseconds, and variance matters as much as the mean.
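
To make the point about distributions concrete, here is a minimal sketch of summarizing latency samples by percentile rather than by mean alone. The sample values and the nearest-rank method are assumptions chosen for illustration, not measurements from any venue.

```python
import math

def percentile(samples, pct):
    """Nearest-rank percentile: the smallest sample with at least pct% of values at or below it."""
    if not samples:
        raise ValueError("samples must not be empty")
    ordered = sorted(samples)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]

def latency_summary(samples_ms):
    """Report the mean alongside p50/p95/p99 so tail behavior stays visible."""
    return {
        "mean": sum(samples_ms) / len(samples_ms),
        "p50": percentile(samples_ms, 50),
        "p95": percentile(samples_ms, 95),
        "p99": percentile(samples_ms, 99),
    }

# Hypothetical samples in milliseconds: 98 fast requests plus two slow outliers.
samples_ms = [1.0] * 98 + [40.0, 60.0]
summary = latency_summary(samples_ms)
print(summary)
```

With these made-up numbers the mean and the median both look healthy, while p99 exposes the outliers. That tail is the dispersion traders experience as slippage.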

Low-latency trading servers. Fast CPU cores, local NVMe, and predictable NIC interrupts on dedicated servers give consistent execution times, which a shared hypervisor cannot guarantee. Melbicom’s regional placement, together with anycast or fast-failover BGP, lets operators keep users as close to ingress as possible while maintaining routing policy.

Real-time market data feeds. Low latency also requires line-rate fan-out. Web-based market-data services must push order-book deltas to millions of sockets on demand. Server ports of 10/25/40/100/200 Gbps, multi-provider transit, and Tier III/IV sites leave enough headroom even during volatility spikes. CDN PoPs should primarily carry static UI assets, snapshots, and historical files, bringing bytes closer to users and offloading origins.

How Do Dedicated Servers Handle Surging Trading Volumes?

Cryptocurrency activity does not rise gradually; it arrives in bursts. Traffic can jump 5× when token listings, liquidations, or macro headlines hit. Coinbase recorded an event where traffic increased fivefold in four minutes, faster than autoscaling could react, so error rates spiked briefly. It is cited here not as a history lesson but as a warning data point. The design response is multi-layered:

Provision for the peak, not the average. Publicly stated capacities for matching engines at major venues are on the order of ~1.4 million orders per second, an ambitious but useful engineering goal. Dedicated servers make this achievable by letting teams tune cores, frequency, RAM, and NVMe layout for the hot path, without multi-tenant interference.

Scale horizontally under load. Stateless gateways, partitioned order books, and replicated stateless market-data publishers distribute load across nodes. Servers provide the predictable per-node ceiling; orchestration provides the elasticity.

Keep bandwidth slack. Egress is usually a harder constraint than compute. With per-server ports up to 200 Gbps, Melbicom helps ensure message storms don’t back up queues.

Exchange matching engine hosting. The engine benefits from high clocks and cache‑resident data structures, low‑latency NICs, and predictable storage‑flush paths; specialized tuning (interrupt coalescing, user‑space networking stacks, CPU pinning) is possible because dedicated servers avoid the variability of shared hypervisors—an advantage when managing microseconds end to end.
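
As one small illustration of the CPU pinning mentioned above, the sketch below restricts the current process to a single core using Python's standard library. It assumes a Linux host (the call is not available on every platform), and the helper name `pin_to_cpu` is hypothetical. A production engine would typically combine this with kernel-level core isolation and IRQ steering, which are out of scope here.

```python
import os

def pin_to_cpu(cpu=None):
    """Pin the current process to one CPU; return the CPU chosen, or None if unsupported."""
    if not hasattr(os, "sched_setaffinity"):
        return None  # the affinity calls are Linux-specific
    allowed = os.sched_getaffinity(0)  # CPUs this process may currently run on
    target = min(allowed) if cpu is None else cpu
    if target not in allowed:
        raise ValueError(f"CPU {target} is not in the allowed set {sorted(allowed)}")
    os.sched_setaffinity(0, {target})
    return target
```

Calling `pin_to_cpu()` early in a hot-path process keeps it from migrating between cores, which removes one source of cache misses and scheduling jitter.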

Ensuring 24/7 Uptime in an Always-On Market Environment

Crypto doesn’t close. Outages in financial services cost millions of dollars per hour; one industry study of trading-floor downtime puts the figure at about $9.33 million/hour, a number that weighs especially heavily on platforms that earn per trade. Uptime discipline rests on three pillars:

- Facility resilience. Tier III/IV hosting with redundant power and cooling and more than one carrier minimizes the risk that hardware or utility maintenance interrupts service. Melbicom lists Tier III/IV locations such as Amsterdam, Los Angeles, Singapore, and Tokyo, among others, with on-site engineers to swap components.

- Redundancy at every function, with no single point of failure. Active-active load balancers, clustered gateways, hot-standby matching engines, and geo-redundant databases mean a single server failure is not noticeable to the user.

- Operational control. Dedicated servers with 24/7 support make it possible to run rolling upgrades, apply kernel patches, and carry out hardware upgrades without downtime.
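
To put the downtime figure in planning terms, the sketch below converts an availability target into expected downtime per year and multiplies it by the $9.33 million/hour estimate cited above. The availability levels are illustrative assumptions, and real losses depend on when an outage happens, so treat the output as an order-of-magnitude guide.

```python
HOURS_PER_YEAR = 365 * 24  # 8,760; leap years ignored

def annual_downtime_hours(availability_pct):
    """Expected hours of downtime per year at a given availability percentage."""
    return HOURS_PER_YEAR * (1 - availability_pct / 100)

def annual_downtime_cost(availability_pct, cost_per_hour):
    """Expected yearly cost of downtime at a flat hourly cost."""
    return annual_downtime_hours(availability_pct) * cost_per_hour

COST_PER_HOUR = 9.33e6  # USD per hour, the industry estimate cited above

for pct in (99.9, 99.99, 99.999):  # illustrative targets
    minutes = annual_downtime_hours(pct) * 60
    cost = annual_downtime_cost(pct, COST_PER_HOUR)
    print(f"{pct}% -> {minutes:.1f} min/year, about ${cost:,.0f}")
```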

Security & Compliance: What Defines a Secure Crypto Exchange Infrastructure?

Compliance is inseparable from performance and security posture. Single-tenant servers provide isolation, ownership of the OS/kernel, and controlled change, which makes auditing and hardening easier:

Secure crypto exchange infrastructure. Dedicated servers avoid multi‑tenant hypervisors and enable strict host‑level controls: hardened baselines, minimized attack surface, hardware‑backed keystore/HSM policies, and full‑fidelity logging. BGP sessions (with BYOIP) enable stable addressing and anycast/fast‑failover ingress control, supporting deterministic routing and policy enforcement.

Finally, certified processes and facilities support compliance. Melbicom is audited against the ISO/IEC 27001:2013 standard, which governs an information security management system (ISMS). That foundation, together with a choice of regions for data residency, helps teams document controls for regulators.

Architecture Patterns That Matter the Most

Modern architectures center on a plan that maximizes determinism and control.

Regional ingress + local bursts. Place gateways and partial services near users, while the authoritative matching engine runs in one or more core regions. UI and read‑heavy endpoints should be close to traders using Melbicom’s 21‑site footprint and 55+ CDN PoPs, while hot paths stay on tuned servers.

Data‑path separation. Individual market‑data publishers and internal risk engines are isolated so a burst in one plane does not choke another service.

Network‑level control. Use BGP‑based anycast and regional failover so source IPs remain stable during maintenance, and prefer short, reliable paths to common client geographies.

Challenges and how high‑performance dedicated servers answer them

| Exchange challenge | Impact if under-engineered | Dedicated-server answer |
|---|---|---|
| Uptime & maintenance | Lost fees and reputation; high-risk upgrades | Tier III/IV sites, hot standby, and rolling upgrades keep trading online. |
| Low-latency execution | Unfair dispersion, slippage, dissatisfied market makers | Regional placement, high-clock CPUs, and deterministic single tenancy keep latency dispersion small. |
| Security & compliance | High breach/audit risk | Single-tenant isolation, OS control, and an ISO/IEC 27001:2013-audited foundation. |
| Volume spikes & fan-out | Queue surges, timeouts, unreliable data feeds | Horizontal clusters on predictable nodes; up to 200 Gbps per server for market-data bursts; fast capacity additions. |

What Capacity and Throughput Targets Should an Exchange Set?

Targets depend on the product mix and the user base, but figures from mass-market venues are a useful reference. Binance cites a matching-engine ceiling of approximately 1.4 million orders per second, a useful target for optimizing the most critical paths. A pragmatic planning model:

- Size order intake for the peak number of concurrent sessions plus 20–40% headroom.

- Size matching so peak bursts clear with no tail buildup.

- Size market‑data egress for worst‑case deltas, not averages.

- Provision storage for write spikes and fast‑recovery snapshots.
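
The first and third items of the planning model can be sketched as simple arithmetic. Every input below (session count, subscriber count, delta rate, message size) is a hypothetical placeholder, and the function names are illustrative; substitute your own measurements.

```python
def with_headroom(peak, headroom_pct):
    """Round up peak * (1 + headroom_pct / 100); both arguments are integers."""
    if headroom_pct < 0:
        raise ValueError("headroom_pct must be non-negative")
    return -(-peak * (100 + headroom_pct) // 100)  # integer ceiling division

def market_data_egress_gbps(subscribers, deltas_per_sec, bytes_per_delta):
    """Worst-case fan-out in Gbps, assuming every subscriber receives every delta."""
    return subscribers * deltas_per_sec * bytes_per_delta * 8 / 1e9

# Hypothetical planning inputs.
peak_sessions = 200_000
session_capacity = with_headroom(peak_sessions, 30)  # inside the 20-40% band
egress = market_data_egress_gbps(100_000, 50, 200)
print(session_capacity, egress)
```

The egress function deliberately models the worst case rather than an average, so compression, conflation, or tiered feeds would lower the real number.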

Melbicom can add capacity quickly with 1,300+ ready configurations and ~2‑hour activation windows, allowing teams to provision for the spike rather than the average.

A Practical Deployment Checklist

- Pin your latency budget. Set p50/p95/p99 targets for order entry, matching, and market-data publication. Bring users close to exchange entry points via regional ingress.

- Right-size the engine. Prefer high-clock CPUs, cache-resident order books, and local NVMe for logs and snapshots, all on single-tenant nodes.

- Design for the surge. Pre‑warm extra gateways and publishers for storm conditions, and size egress for those peaks. Target capacity above the mean.

- Separate planes. Separate order entry, matching, market‑data, and admin networks; no planes are shared.

- Engineer for failure. Use active‑active load balancers, hot‑standby engines, rack‑ and region‑level replication, and tested cutovers.

- Control your routes. Use BGP sessions (with BYOIP where needed) to keep endpoint addresses stable, and combine anycast with fast failover.

- Prove compliance. Map your controls to the ISO/IEC 27001:2013 ISMS and keep the evidence up to date.

Conclusion: Dedicated Servers are the Backbone of Trustworthy Crypto Trading

Exchanges compete on speed, reliability, and stability. That demands infrastructure with low-jitter latency, high sustained throughput, and measured resilience, not just in normal periods but in the exact minutes when markets are most volatile. The data justifies the investment: the scale of crypto volumes, the expectation that execution paths run in microseconds, and the real cost of downtime all point to single-tenant performance and network-level control.

Dedicated servers on a globally distributed, professionally operated platform meet those needs and create architectural freedom: choose regions, match hardware to workloads, push content to the edge, and own your routes. That is how trading venues keep books fair, UIs responsive, and reputations intact, day in and day out.

Build a low‑latency crypto exchange

Provision tuned, single‑tenant hardware in key regions to cut jitter, handle volume spikes, and meet uptime targets for your trading platform.

Get expert support with your services


Brazil Dedicated Server Buying Guide: Performance & MSA

Brazil has a huge user base, and given today’s latency-sensitive apps, hosting a dedicated server locally is no longer a nice-to-have. In 2023, over 84% of the population was online, generating more regional traffic than ever and making São Paulo one of the world’s most active interconnection hubs. IX.br traffic peaks above 31 Tb/s, with São Paulo alone above ~22 Tb/s. Hosting in Brazil cuts round-trip times compared with backhauling to North America over routes such as Seabras-1 (~105 ms RTT between New York and São Paulo); in-country paths are lower still.

Host in LATAM: Reserve servers in Brazil, CDN PoPs across 6 LATAM countries, 20 DCs beyond South America

Brazil Dedicated Server Configuration: Recommendations

When purchasing a Brazil‑based dedicated server, there are four main considerations—CPU/GPU, storage, network, and terms/upgrade path—each of which needs to be validated against price‑to‑performance, redundancy, and DDoS exposure risks to make a wise infrastructure investment.

Matching CPU class to workloads

Forget the brand and pick the CPU the job requires. In other words, match your workload’s needs to each family’s strengths:

| CPU Type | Key Strengths | Ideal For |
|---|---|---|
| AMD EPYC | High core counts, strong throughput/watt, abundant PCIe lanes, and memory bandwidth. EPYC has been shown in independent reviews to lead multi‑threaded throughput at similar power envelopes. | Virtualization (VM density), high‑concurrency SaaS, analytics, and mixed microservices. |
| Intel Xeon | Competitive per‑core turbo, integrated accelerators (e.g., AMX/QuickAssist) that can outpace EPYC on select code paths when software takes advantage. | Latency‑sensitive or accelerator‑aware workloads such as real‑time APIs, certain inference, compression/crypto, etc. |
| AMD Ryzen | High clocks (≈4.5–5 GHz boosts) without breaking the bank, providing an impressive per‑thread snap on 8–16 cores. | Budget‑restricted game servers, small web/app nodes. Cases where single‑thread speed matters more than socket scale. |

CPU Guidance:

- If you have many containers/VMs to consolidate, EPYC’s cores/PCIe lanes often provide better overall VM density.

- If your stack depends on Intel accelerators or the highest turbo, scope Xeon SKUs, then verify that the relevant code paths actually use those blocks efficiently.

- If your workload is lightly threaded and you need the cheapest dedicated server in Brazil, Ryzen is worth considering, but do your homework: it is fast, yet it comes with ECC and support trade-offs.

Do GPUs change unit economics?

The short answer is yes. Many workloads are GPU-amenable, including training/inference, vision, transcoding, HPC, and GIS rendering, and modern data pipelines increasingly assume GPU offload. A single GPU server can replace racks of CPU-only nodes on parallel tasks, cutting both latency and power per unit of work considerably. However, if you have no GPU-eligible jobs, the upfront cost is unnecessary, and many teams on tighter budgets skip it. The wise approach is to keep an upgrade path open for GPUs later: plan for additional power and cooling and for free PCIe slots.

Storage tiers: NVMe vs. SATA vs. HDD

Hot data belongs on NVMe, which speaks PCIe directly and avoids the bottlenecks of SATA/AHCI. Manufacturer guidance shows a night-and-day difference: SATA tops out around ~550 MB/s, while PCIe 4.0 x4 NVMe delivers ~7–8 GB/s, with IOPS in the low millions and protocol overhead measured in microseconds. Operating at that scale makes databases, search, queueing, and log-heavy microservices far more efficient. SATA SSDs or HDDs can be reserved for cold tiers and backups.
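
A quick back-of-the-envelope calculation shows what the throughput gap means in practice. The sketch below uses the approximate sequential rates quoted above and a hypothetical 500 GB snapshot; real drives vary with queue depth, block size, and thermal limits.

```python
SATA_MB_S = 550        # approximate SATA ceiling quoted above
NVME_GEN4_MB_S = 7000  # low end of the ~7-8 GB/s range quoted above

def transfer_seconds(size_gb, throughput_mb_s):
    """Seconds to stream size_gb sequentially (decimal units, 1 GB = 1000 MB)."""
    return size_gb * 1000 / throughput_mb_s

snapshot_gb = 500  # hypothetical recovery snapshot
sata_s = transfer_seconds(snapshot_gb, SATA_MB_S)
nvme_s = transfer_seconds(snapshot_gb, NVME_GEN4_MB_S)
print(f"SATA: {sata_s / 60:.1f} min, NVMe: {nvme_s / 60:.1f} min, ratio {sata_s / nvme_s:.1f}x")
```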

Port Speed: Do You Actually Need 1/10/100/200 Gbps?

Monthly transfer totals don’t tell you much; size for peaks instead, using the following as a baseline:

- 1 Gbps can adequately cope with moderate traffic and the control plane.

- 10 Gbps is the typical choice for serious origin workloads because it supports multi‑TB/day transfer and has the headroom needed for spikes.

- 100–200 Gbps is usually reserved for large streaming, high‑fanout APIs, and big file distribution, and is often required when a single origin seeds a CDN or large clusters.

Unmetered vs. metered: Transit in Brazil keeps improving, but pricing at scale remains aggressive. An unmetered, fixed-price port is preferable when traffic is hard to predict and bursts are part of your business; otherwise, overages can be a nasty surprise. If your traffic is steady, committing to a metered plan may be more economical.

Quality routing: Given São Paulo’s IX.br scale, exceptional local delivery is possible when a provider peers well. Validate this yourself: test IPs, run traceroutes, and confirm the design supports multiple uplinks. The IX.br system spans dozens of IXPs nationwide with an aggregate peak above 31 Tb/s, and São Paulo is the largest.

Brazil Deploy Guide: Avoid costly mistakes, real RTT & backbone insights, architecture playbook for LATAM

How to Map Price‑to‑Performance without Compromising Resilience

For a dedicated server hosted in Brazil, you need a sensible evaluation worksheet:

- CPU class vs. utilization: EPYC’s price/performance often wins when cores are >70% busy during normal hours, but if p99 latency is dominated by a few threads, prioritize top‑turbo Xeon or Ryzen and tune the scheduler.

- NVMe everywhere for UX: All databases, caches, search indexes, and write‑heavy services should be kept live on NVMe, and SATA/HDD should be reserved for archival object stores or long‑tail logs.

- Bandwidth that changes UX: If origins saturate at 60% or more at peak, step up both the port and the commit. For continent-wide reach, terminate your origin in São Paulo and push to the edge through Melbicom’s CDN, which covers São Paulo, Buenos Aires, Santiago, Bogotá, Lima, and other cities.

- DDoS risk and coverage: Attacks are prevalent, and it is worth remembering that the largest H1 event (919 Gbps) landed in‑country. So investigate your chosen provider’s capacity, scrubbing options, and incident playbooks, whether or not your surfaces are DDoS‑prone.
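
Two of the worksheet rules above translate directly into code. The sketch below applies the 70% core-utilization rule and the 60% port-saturation rule to the port tiers mentioned in this guide. The function names and the fallback answer for lightly loaded hosts are illustrative assumptions, not part of any provider tooling.

```python
PORT_TIERS_GBPS = (1, 10, 40, 100, 200)  # port sizes mentioned in this guide

def recommend_port(peak_gbps, max_utilization=0.60):
    """Smallest tier that keeps peak traffic below max_utilization of the port."""
    for tier in PORT_TIERS_GBPS:
        if peak_gbps < tier * max_utilization:
            return tier
    return None  # one port is not enough: split across nodes or lean on the CDN

def recommend_cpu(avg_core_utilization, p99_bound_by_few_threads):
    """Apply the CPU-class rule of thumb from the worksheet."""
    if p99_bound_by_few_threads:
        return "high-turbo Xeon or Ryzen"
    if avg_core_utilization > 0.70:
        return "EPYC"
    return "either; profile first"  # assumption: the guide gives no rule for this case

print(recommend_port(5), recommend_port(7), recommend_cpu(0.8, False))
```

A 7 Gbps peak lands on the 40 Gbps tier because it would already push a 10 Gbps port past the 60% threshold.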

Which Contract Terms Matter the Most?

Flexible MSA, monthly billing. When entering new territory, it’s best to avoid long lock‑ins and seek monthly terms with providers that can ensure simple change orders for upgrades.

Speed to capacity. Pick providers with API‑ and panel‑driven deployment so you don’t have to wait weeks for parts.

Scale‑up and scale‑out. Prioritize platforms that allow you to scale up easily—adding RAM/NVMe/GPU and uprating ports—and to manage scale‑out with consistent sibling nodes across regions. Both patterns can be handled by Melbicom with high‑bandwidth server lines and identical builds across regions without the need to re‑platform.

Why Local Presence in Brazil Changes Performance Math

The user base in Brazil is large and connections are in place, but intercontinental RTTs are physics‑bound, meaning an origin in São Paulo can remove ~100 ms compared to average U.S. round‑trips, which makes a difference for cart conversion, stream stability, game tickrates, and fraud or KYC API responsiveness.
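
The physics bound can be estimated with one line of arithmetic. The sketch below assumes light travels through optical fiber at roughly 200 km per millisecond (about two thirds of its speed in vacuum) and uses hypothetical path lengths rather than surveyed cable routes. Real RTTs sit above this floor because of routing detours, equipment, and queuing.

```python
FIBER_KM_PER_MS = 200.0  # rough propagation speed of light in optical fiber

def min_rtt_ms(path_km):
    """Lower bound on round-trip time from propagation delay alone."""
    return 2 * path_km / FIBER_KM_PER_MS

# Hypothetical one-way fiber path lengths in kilometers.
intercontinental_km = 10_500
in_country_km = 400

print(min_rtt_ms(intercontinental_km), min_rtt_ms(in_country_km))
```

No amount of server tuning recovers the propagation delay of a long path, which is why moving the origin closer is the only fix for it.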

The ideal playbook:

- Transactional APIs and low-latency trading: Xeon (high turbo) or high-clock Ryzen; NVMe storage; 10–40 Gbps port; CDN for static assets; warm standby node.

- SaaS with many concurrent sessions: EPYC (cores/VM density); NVMe + generous RAM; 10–40 Gbps; CDN for assets; warm standby node.

- Streaming / file distribution: EPYC or Xeon; NVMe cache tier; 40–200 Gbps at origin; CDN mandatory; segment traffic to protect origin.

- ML inference/vision: Xeon with AMX or EPYC + discrete GPUs depending on stack; NVMe scratch; 10–40 Gbps; plan GPU swap/expansion.

Tip: If you’re weighing a cheap dedicated server in Brazil, ensure it still checks the boxes that affect UX the most: modern disks, a realistic port, and access to a South American CDN.

Key Takeaways You Can Act On

- Put servers where users are: Brazil’s dense interconnection (31 Tb/s+) and direct U.S. routes (~105 ms RTT) make local origin hosting the default choice for responsiveness.

- Match CPUs to code paths: EPYC for thread‑rich concurrency and PCIe fan‑out; Xeon/Ryzen for high‑clock responsiveness or accelerator‑aware stacks. Validate with your own profiles.

- Make NVMe non-negotiable: SATA caps near ~550 MB/s while PCIe 4.0 x4 NVMe reaches ~7–8 GB/s with dramatically higher IOPS, a difference users feel.

- Buy the port you need for peaks: Most origins should start at 10 Gbps; grow to 40/100/200 Gbps for heavy media or fan‑out.

- Assume DDoS exposure: Brazil’s share of regional attacks and the 919 Gbps event argue for in‑country capacity and clear mitigation paths.

- Keep terms agile: Monthly billing, fast stock, and no re‑platforming to scale are worth more than a one‑time discount.

Thinking About Hosting in Brazil with Us?

Melbicom can help you finalize this blueprint as we are opening priority access for teams preparing Brazil rollouts: tell us your traffic profiles, exact technical specifications (CPU/GPU preferences, NVMe tiers, port targets, redundancy/DDoS posture), and timelines, and we’ll shape a tailored offer and pre‑stage capacity via our network and South American CDN — with first‑wave placement when São Paulo dedicated servers go live. Contact us.

Why Melbicom

What sets Melbicom apart is infrastructure freedom. We operate our own network and deliver high‑bandwidth solutions globally, with custom hardware setups almost anywhere and an international, remote‑first team built for speed. In practice that means deployment freedom (run anything, anywhere, at any scale), configuration freedom (tune CPU/GPU, NVMe tiers, and ports to fit your workload), operational freedom (no shared tenancy or lock‑in), and experience freedom (clean onboarding and transparent control). Share your expected volumes and failure domains now; we’ll validate routes, right‑size ports, and lock in the upgrade path so day‑one in Brazil is smooth, predictable, and reversible.

Plan your Brazil dedicated server rollout

Tell us your CPU/GPU, NVMe, and port needs. We’ll validate routes, size capacity, and prepare São Paulo servers with CDN options and DDoS coverage.


Secure & Low-Latency Infrastructure for DeFi Platforms

Stablecoin rails and decentralized exchanges have turned into always‑on, high‑throughput markets. In the past year alone, stablecoins processed more than $27.6 trillion of on‑chain transactions—a milestone that eclipsed card‑network volumes. In October, DEX volume set a record above $613B as market share shifted toward on‑chain execution. In this environment, infrastructure becomes strategy: milliseconds determine if liquidations trigger on time, arbitrage captures spread, and user trust holds through volatility.

This article focuses on the two levers that consistently move the needle for DeFi operators: ultra‑low latency and robust security. We’ll show how dedicated, high‑performance servers—deployed across geographically distributed locations and paired with hardware‑backed key protection—deliver that edge.


Why Does Ultra‑Low Latency Matter in DeFi?

Speed is an economic value. When slots or blocks tick quickly—Solana slots are ~400–600 ms—even modest network delays can mean missing a block window and the price that came with it. The same principle applies across L2s and parallelized chains whose finality targets are measured in sub‑seconds.
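
A toy model makes the slot arithmetic explicit. It assumes a fixed slot length and a single one-way network delay, and it ignores leader scheduling, forwarding, and processing time, so it only illustrates how quickly delay consumes a slot budget.

```python
def slot_fraction_used(network_delay_ms, slot_ms):
    """Share of one slot consumed by network delay alone."""
    return network_delay_ms / slot_ms

def arrives_in_slot(elapsed_in_slot_ms, network_delay_ms, slot_ms):
    """True if a transaction sent elapsed_in_slot_ms into a slot lands before the slot ends."""
    return elapsed_in_slot_ms + network_delay_ms < slot_ms

# Hypothetical case: a 400 ms slot, with the transaction sent 300 ms into it.
print(slot_fraction_used(80, 400))    # share of the slot spent in flight
print(arrives_in_slot(300, 80, 400))  # short path
print(arrives_in_slot(300, 150, 400)) # longer path
```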

Three latency domains usually dominate:

- Mempool & propagation. If your transactions or oracle updates hit builders/validators after competitors’, your fill quality degrades. Private order flow paths and fast peers materially reduce that race (more on this below).

- User-facing APIs. A few dozen extra milliseconds between a trader and your RPC/API endpoint can push them past a price move. For latency-sensitive flows, in-region endpoints and short network paths are the difference between taking and missing liquidity.

- Off‑chain components. Indexers, sequencers, matching engines, risk services—anything off‑chain behaves like a low‑jitter trading system. Here, exclusive access to CPUs, memory, and NICs matters. Public, shared endpoints routinely show p99 latencies in the 50–500 ms range, while dedicated pipelines land in the 5–50 ms band; colocation and private links push lower still.

Bottom line: every hop and every shared layer adds variance. Dedicated servers placed close to users and chain nodes, on clean network paths, cut that variance to size.

Designing Low‑Latency DeFi Hosting

The architecture is surprisingly consistent across high‑performing teams:

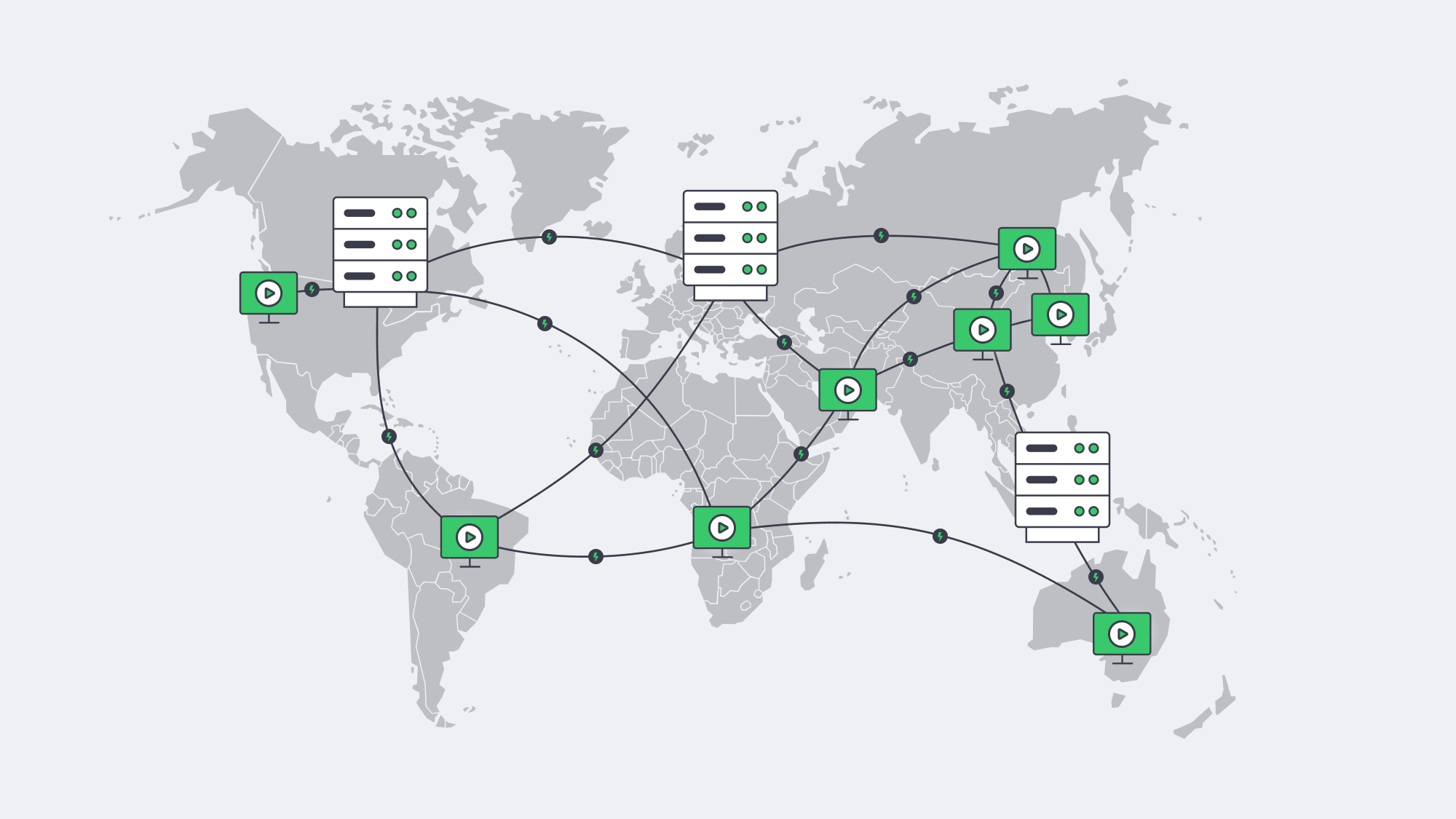

Geographically distributed nodes/servers. Place RPC, validators, indexers, and app backends in multiple strategic regions so users and services reach a nearby endpoint. This minimizes round‑trips and smooths block/gossip propagation. With Melbicom’s 21 Tier III/IV data centers on a 14+ Tbps backbone and 50+ CDN PoPs, teams place infrastructure precisely where latency is lowest and failover is clean. Melbicom offers 1–200 Gbps ports per server, which keeps spikes from queuing.

Direct, high‑quality connectivity. Keep routes short and stable. In practice that means peering and BGP sessions to hold constant IPs (useful for RPC/validator endpoints), and to steer traffic over better paths. Melbicom provides free BGP sessions on dedicated servers with BYOIP and community support so you can engineer for speed and consistency.

Edge acceleration where it helps. Wallet assets, ABIs, snapshots, and UI bundles shouldn’t traverse oceans on every request. Push reads to the edge via a 50+ PoP CDN, keep writes local, and right‑size origins to back hot regions.

Run your own nodes for critical paths. Public RPCs are convenient but multi‑tenant queues add jitter. Dedicated single‑tenant nodes eliminate shared contention and rate limits, unlocking deterministic fetch/submit times. Melbicom’s Web3 page details dedicated RPC for dApps and NVMe tiers for archival/full nodes with unmetered bandwidth—practical choices that shorten sync windows and keep p95 latency flat.

Hardening a Secure DeFi Platform Infrastructure

Performance without protection is a liability. Modern stacks are converging on two complementary controls:

1) Hardware‑backed key custody. Hardware Security Modules (HSMs) generate and store keys in tamper‑resistant hardware; keys never appear in server memory. Secure enclaves (confidential computing) restrict access to decrypted key material to attested code only—so even a root‑level OS compromise can’t exfiltrate secrets. This is now standard practice at institutional custody and increasingly common for DeFi admin/oracle keys. The effect is simple: keys stay out of reach, signatures remain valid, and governance or bridge operations become dramatically safer.

2) Private transaction paths to limit MEV exposure. Public mempools leak intent; searchers reorder and sandwich transactions. Private RPCs and relays route transactions straight to builders/validators, bypassing the public mempool and reducing front‑running risk. Wallets and dApps widely support these routes today. For sensitive flows—liquidations, large swaps, sequencer inputs—this is now table stakes.
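
As a rough sketch of routing by sensitivity, the code below chooses between a public and a private endpoint and builds a standard JSON-RPC request body. It assumes an Ethereum-style chain and a private endpoint that accepts the ordinary `eth_sendRawTransaction` method; the URLs, flow names, and helper function are hypothetical, and nothing is sent over the network. Check your relay provider's documentation for its actual interface.

```python
import json

PUBLIC_RPC_URL = "https://public-rpc.example"    # hypothetical endpoint
PRIVATE_RPC_URL = "https://private-rpc.example"  # hypothetical endpoint

# Flows the text above treats as sensitive.
SENSITIVE_FLOWS = {"liquidation", "large_swap", "sequencer_input"}

def build_submission(flow, signed_tx_hex, request_id=1):
    """Return (url, JSON body) for submitting an already-signed transaction."""
    url = PRIVATE_RPC_URL if flow in SENSITIVE_FLOWS else PUBLIC_RPC_URL
    body = json.dumps({
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "eth_sendRawTransaction",
        "params": [signed_tx_hex],
    })
    return url, body

url, body = build_submission("liquidation", "0xabc")  # placeholder payload, not a real transaction
print(url, body)
```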

Resilience is security, too. Fault‑tolerant DeFi platforms spread critical services across regions and vendors so no single facility or network interruption halts the system. Melbicom operates Tier III/IV facilities with 1–200 Gbps per‑server networking across EU/US/Asia, plus 24/7 support and fast hardware replacement—practical ingredients for active‑active designs and rolling upgrades without downtime.

Dedicated Servers for DeFi Performance: Where Does the Edge Come From?

Exclusive resources, predictable timing. Dedicated servers remove noisy‑neighbor effects. CPU cycles, RAM bandwidth, NVMe IOPS, and NIC queues are yours alone—critical for consistent block ingestion, indexing, and order handling. Shared instances might average fine; tail latency is what breaks liquidations and market‑data pipelines.

Bandwidth and topology you can engineer. With ports up to 200 Gbps and guaranteed throughput, you scale horizontally without egress shocks or surprise throttles. Add BGP with BYOIP to keep endpoint IPs stable through maintenance and region changes.

Full‑stack control. You choose OS hardening, kernel parameters, I/O schedulers, and monitoring. You decide how your enclaves and HSMs integrate. You pin inter‑DC replication on private links. That control translates into faster incident response and easier audits.

Global footprint, local latency. Melbicom lists 21 data centers across key hubs with a 14+ Tbps backbone and 50+ CDN PoPs, so you can put validators, RPC, and app backends near users and chain peers rather than pulling everything through a single region.

DeFi infrastructure challenges and solutions

| DeFi infrastructure challenge | Risk if unaddressed | Dedicated‑server solution |
|---|---|---|
| High network latency to users/validators | Slippage, missed blocks; competitors see/act first | Geo‑distributed nodes/servers with BGP‑steered routing and 1–200 Gbps ports reduce hops and queuing. |
| Shared/public RPC bottlenecks | Rate limits, multi‑tenant p99 spikes | Single‑tenant RPC and local full nodes on exclusive hardware; deterministic throughput. |
| Single‑region dependency | Regional failure = global outage | Active‑active regions in Tier III/IV DCs with fast failover and steady IPs via BGP/BYOIP. |
| Private key exposure | Irreversible loss of funds/governance | HSMs + secure enclaves isolate keys and signing; enclaves run attested code only. |
| MEV front‑running | Worse execution; failed transactions | Private RPC/relays send orderflow directly to builders, bypassing the public mempool. |

What Should Teams Actually Build?

- Prioritize “nearby” everything. Place RPC/validators/indexers in the same region as your users and counterparties; keep propagation paths short.

- Adopt private orderflow paths. Route sensitive transactions through private RPC/relays to curb MEV exposure and reduce revert waste.

- Use dedicated servers for deterministic performance. Aim for 5–50 ms end‑to‑end service targets on critical paths; eliminate multi‑tenant p99 tails.

- Make hardware do security work. Store keys in HSMs; run signers in secure enclaves; restrict management to isolated networks.

- Engineer for failure. Active‑active multi‑region, BGP for sticky IPs, private inter‑DC links for state replication, and 24/7 ops so failovers are boring.
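
The "nearby" and "engineer for failure" items can be combined in a small application-level selector: prefer the closest healthy region and fall back automatically. This is a sketch of client-side selection only, with made-up regions and RTTs. It complements network-level mechanisms such as BGP and does not replace them.

```python
from dataclasses import dataclass

@dataclass
class Endpoint:
    region: str
    healthy: bool
    rtt_ms: float

def pick_endpoint(endpoints):
    """Return the healthy endpoint with the lowest measured RTT, or None if all are down."""
    healthy = [e for e in endpoints if e.healthy]
    return min(healthy, key=lambda e: e.rtt_ms) if healthy else None

# Hypothetical health-check results.
endpoints = [
    Endpoint("eu-west", healthy=False, rtt_ms=8.0),
    Endpoint("eu-central", healthy=True, rtt_ms=14.0),
    Endpoint("us-east", healthy=True, rtt_ms=85.0),
]
chosen = pick_endpoint(endpoints)
print(chosen.region if chosen else "no healthy endpoint")
```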

Why This All Matters Right Now

Block production windows are short—hundreds of milliseconds on some chains—and on‑chain volume is rising. In practical terms, latency and security are revenue, not line items. Teams that deploy geographically distributed nodes/servers on dedicated hardware, and that seal keys in hardware, consistently ship faster execution and fewer surprises. The best DeFi platforms today feel instant and stay online through chaos because their infrastructure is designed to do exactly that.

For operators, the path is clear: own the critical path—where your latency and trust originate. That means single‑tenant servers close to users and chain peers, private orderflow routes, and hardware‑backed key protection.

Deploy low‑latency DeFi hosting

Launch dedicated servers with 1–200 Gbps ports, BGP sessions, and Tier III/IV data centers to cut latency and boost resilience for nodes, RPC, and backends.


How Dedicated Servers Power Seamless Live Streaming

Live streaming at scale is ruthless on infrastructure. Millions of viewers can arrive within seconds; every additional millisecond of delay compounds into startup lag, buffer underruns, and churn. Decades of QoE research show the audience has little patience: abandonment begins when startup delay exceeds ~2 seconds, rising ~5.8% for each additional second—a brutal slope for real‑time broadcasts.

This piece examines how dedicated server infrastructure delivers seamless live streaming for events and real‑time broadcasts: why single‑tenant performance matters under concurrency stress, how edge computing and a CDN pull content closer to viewers to minimize lag, and which failover patterns keep streams online through faults.

Choose Melbicom: 1,300+ ready-to-go servers, 21 global Tier IV & III data centers, 55+ PoP CDN across 6 continents

Why Is “Seamless” Live Streaming So Hard?

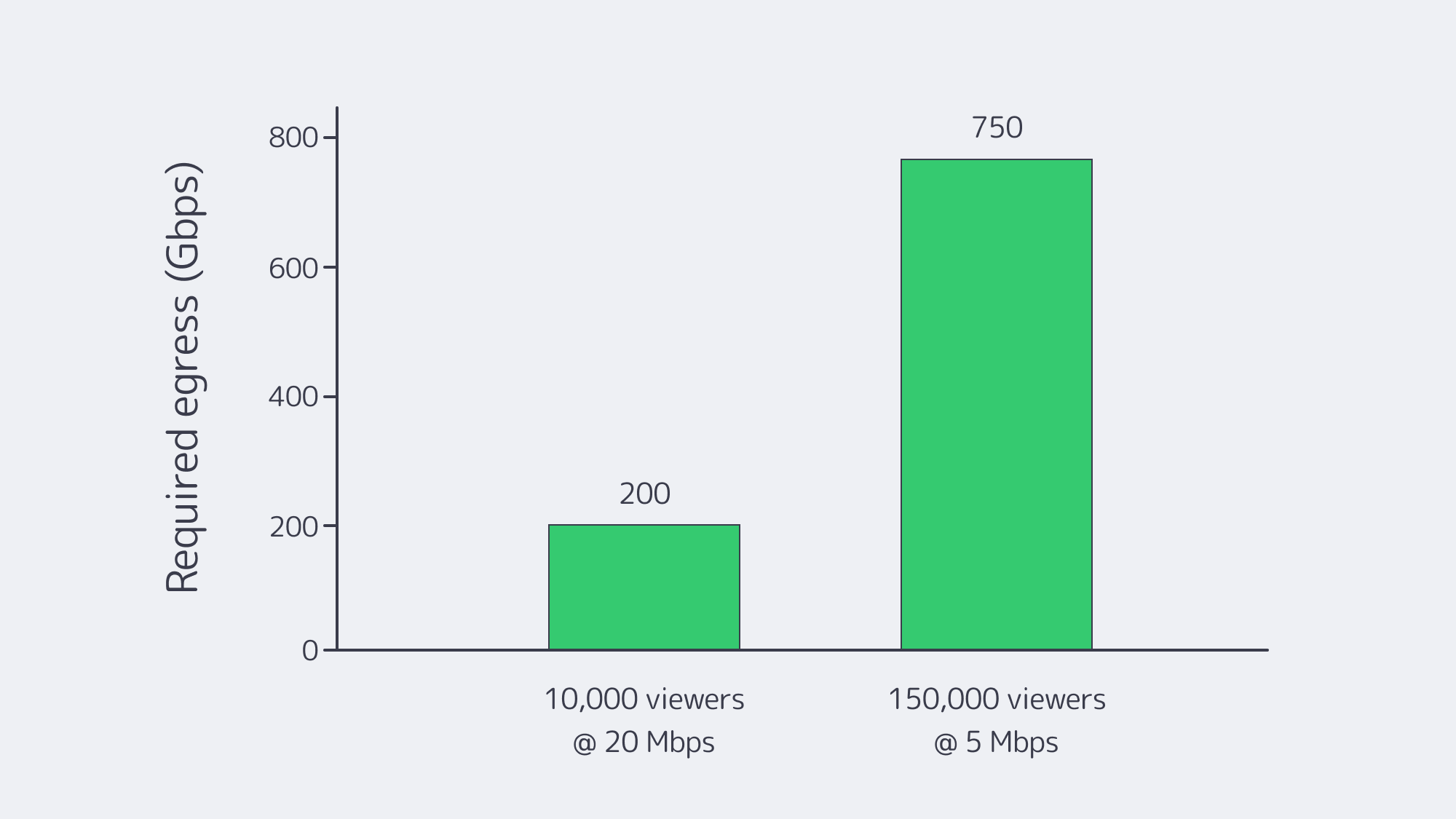

Concurrency and bandwidth. Peaks are spiky and simultaneous. If 10,000 viewers watch a 4K ladder at ~20 Mbps, total delivery egress must sustain ~200 Gbps instantly. At a million viewers, the same arithmetic gives ~20 Tbps, well beyond any single facility’s comfort zone. And the payloads are heavy: Netflix’s own guidance pegs 4K at up to ~7 GB per hour per viewer, so petabytes evaporate quickly at audience scale.
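The arithmetic in this paragraph is easy to reproduce. The sketch below is only a back-of-the-envelope helper: the function names are made up for illustration, every viewer is assumed to pull the same bitrate, and units are decimal (1 GB = 1,000 MB).

```python
def aggregate_egress_gbps(viewers: int, bitrate_mbps: float) -> float:
    """Total delivery bandwidth in Gbps if every viewer pulls the same bitrate."""
    return viewers * bitrate_mbps / 1000.0


def gb_per_hour_to_mbps(gb_per_hour: float) -> float:
    """Average bitrate implied by an hourly data volume (decimal GB, 8 bits per byte)."""
    return gb_per_hour * 8 * 1000 / 3600


if __name__ == "__main__":
    print(aggregate_egress_gbps(10_000, 20))     # 200.0 Gbps
    print(aggregate_egress_gbps(1_000_000, 20))  # 20000.0 Gbps, i.e. 20 Tbps
    print(round(gb_per_hour_to_mbps(7), 1))      # 15.6 Mbps average for ~7 GB/hour
```

The last line shows that ~7 GB per hour works out to an average of roughly 15.6 Mbps, which is in the same range as the ~20 Mbps used for the peak estimate.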

Physics and protocol trade‑offs. The farther a viewer is from origin, the larger the buffer needed to ride out jitter. Traditional HLS/DASH favored stability over speed; newer modes (LL‑HLS/LL‑DASH) trade smaller chunks and partial segment delivery for 2–5 second end‑to‑end delays—only if the network and servers keep up.

Viewer intolerance for interruptions. The research is unambiguous: seconds of startup or rebuffering drive measurable abandonment and revenue loss. That’s why platforms that win on live events are the ones that engineer for steady latency under unpredictable demand, not just average throughput.

What Makes a Live Streaming Dedicated Server the Right Foundation?

Dedicated servers give streaming teams exclusive, predictable performance—no hypervisor jitter, no “noisy neighbors,” and full control of CPU, RAM, NIC queues, storage layout, and kernel networking. When tuned for live media (fast NVMe for segments and manifests; large socket buffers; modern congestion control), dedicated hosts push line‑rate 10/40/100+ Gbps consistently, which is the difference between a stable 2‑sec ladder and a stuttering one at peak.
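One way to confirm that a host is actually tuned as described is to read the relevant Linux sysctls before an event. The following is a minimal sketch, not a tuning guide: the sysctl names are standard Linux keys, but the 64 MiB buffer floor and the choice of BBR are illustrative assumptions, and the right values depend on port speed and RTT.

```python
from pathlib import Path

# Hypothetical targets for illustration only; tune for your NIC speed and RTT.
MIN_BUFFER_BYTES = 64 * 1024 * 1024
WANTED_CC = "bbr"


def read_sysctl(name: str, root: str = "/proc/sys") -> str:
    """Read a sysctl value such as 'net.core.rmem_max' from the proc filesystem."""
    return (Path(root) / name.replace(".", "/")).read_text().strip()


def check_tuning(root: str = "/proc/sys") -> dict:
    """Return pass/fail per setting. Read-only: nothing on the host is changed."""
    return {
        "net.ipv4.tcp_congestion_control":
            read_sysctl("net.ipv4.tcp_congestion_control", root) == WANTED_CC,
        "net.core.rmem_max":
            int(read_sysctl("net.core.rmem_max", root)) >= MIN_BUFFER_BYTES,
        "net.core.wmem_max":
            int(read_sysctl("net.core.wmem_max", root)) >= MIN_BUFFER_BYTES,
    }


if __name__ == "__main__":
    for key, ok in check_tuning().items():
        print(f"{key}: {'ok' if ok else 'needs attention'}")
```

The `root` parameter exists so the check can be pointed at a fixture directory in tests; on a real Linux host the default path applies.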

Melbicom’s platform illustrates the point: 1,300+ ready‑to‑go configurations across 21 global data centers (Tier III/IV) enable precise right‑sizing and regional placement; per‑server bandwidth options reach up to 200 Gbps in select sites; and free 24/7 support plus 4‑hour component replacement keep incidents from turning into outages.

Cost and predictability also tilt in favor of dedicated as you scale: flat‑priced ports (rather than per‑GB egress) and the ability to drive them at capacity let teams provision for the peak rather than the median—critical when a broadcast goes viral.

Comparison at a glance

| Key factor | Dedicated server infrastructure for streaming | Cloud VM infrastructure for streaming |
|---|---|---|
| Performance under load | Exclusive hardware; stable CPU/I/O and line‑rate 10–200 Gbps per server when available. | Multi‑tenant variability; hypervisor overhead can add jitter under peak. |
| Latency & routing | Full control of stack, peering, and placement; regions chosen to minimize RTT to viewers. | Less control over routing; regions may not align with audience clusters. |
| Customization | Tune OS, NICs, storage, and encoders; add GPUs for real‑time transcode. | Constrained instance shapes; less low‑level tuning; GPU/storage premiums. |

Why it matters: LL‑HLS/LL‑DASH only hold low single‑digit‑second latency at scale when origin and mid‑tier nodes can serve small segments immediately and the upstream routes stay clean. That favors single‑tenant boxes on fast ports in the right metros.

How Does Edge Computing Cut Latency?

The single most reliable way to reduce live delay and buffering is to shorten the distance between content and viewer. A CDN/edge layer fetches each segment once from origin, then serves it from nearby points of presence—collapsing round‑trip time and stabilizing last‑mile variability. Industry analyses attribute step‑function latency gains to pushing compute and cache deeper into the network; the architectural goal is simple: keep media within a handful of hops of the player.
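The "fetch once, serve many" behavior can be illustrated with a toy cache. This is a deliberately simplified sketch, not how any particular CDN is implemented: `EdgeCache` and its callback are hypothetical names, and real edges also handle eviction, TTLs, and coalescing of concurrent misses.

```python
from typing import Callable, Dict


class EdgeCache:
    """Toy in-memory PoP cache: each segment is pulled from origin at most once."""

    def __init__(self, fetch_from_origin: Callable[[str], bytes]) -> None:
        self._fetch = fetch_from_origin
        self._store: Dict[str, bytes] = {}
        self.origin_fetches = 0

    def get(self, segment_path: str) -> bytes:
        if segment_path not in self._store:   # cache miss: go to origin once
            self._store[segment_path] = self._fetch(segment_path)
            self.origin_fetches += 1
        return self._store[segment_path]      # cache hit: served from the edge


if __name__ == "__main__":
    cache = EdgeCache(lambda path: b"media bytes for " + path.encode())
    for _ in range(10_000):                   # 10,000 viewers ask for the same segment
        cache.get("live/seg_001.m4s")
    print(cache.origin_fetches)               # 1
```

However many viewers request a segment from a given PoP, the origin sees one request for it, which is why origin ports can be sized for miss traffic rather than for the full audience.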

Melbicom’s CDN spans 55+ PoPs across 36 countries and 6 continents, with HTTP/2, TLS 1.3, and video delivery features (HLS/DASH). In practice, that lets teams park origins on dedicated hosts in a few strategic metros and let the edge take the fan‑out load—especially during flash crowds.

Low‑latency protocols amplify the benefit. LL‑HLS relies on small partial segments (parts), and LL‑DASH on chunked transfer; when those parts originate from an edge 15–30 ms away instead of a core 150–300 ms away, live delay stays in budget and rebuffer risk collapses. Apple’s LL‑HLS design notes and independent guidance consistently place live latency targets around 2–5 seconds for scalable HTTP‑based delivery, which is achievable only with strong edge proximity.
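To see why round-trip time matters so much for small parts, it helps to compare the time spent fetching one part with the time that part takes to play. The model below is a rough sketch under loud assumptions: one round trip per part on a warm connection, a 0.5-second part, a 5 Mbps rendition, and 50 Mbps of available throughput are all illustrative numbers, not figures from any specification.

```python
def part_fetch_ms(rtt_ms: float, part_seconds: float,
                  bitrate_mbps: float, throughput_mbps: float) -> float:
    """One request round trip plus transfer time for a single part."""
    transfer_ms = part_seconds * 1000 * bitrate_mbps / throughput_mbps
    return rtt_ms + transfer_ms


def slack_ms(rtt_ms: float, part_seconds: float = 0.5,
             bitrate_mbps: float = 5.0, throughput_mbps: float = 50.0) -> float:
    """Time left in each part interval after the fetch; negative means falling behind."""
    fetch = part_fetch_ms(rtt_ms, part_seconds, bitrate_mbps, throughput_mbps)
    return part_seconds * 1000 - fetch


if __name__ == "__main__":
    for rtt in (20, 300):
        print(f"RTT {rtt} ms -> slack {slack_ms(rtt):.0f} ms per part")
```

Under these assumptions a 20 ms edge leaves about 430 ms of slack per part, while a 300 ms path leaves about 150 ms, so any jitter or retransmission on the long path eats directly into the player's buffer.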

Where should the best streaming server sit?

- Place dedicated origins in the same continent (often the same country) as your largest live cohorts; use secondary origins where you see sustained daily concurrency.

- Put a CDN/edge in front; prefer footprints with PoPs near your audience (and peering with their ISPs).

- Keep origins lean: hot segments on NVMe; manifest/segment I/O localized; avoid mixing heavy analytics or ad‑decision engines on the same hosts.
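The placement advice above can be turned into a simple comparison: weight each candidate metro's RTT by where the viewers are. This is a minimal sketch; the region names, viewer counts, and RTT figures are invented for illustration and should be replaced with your own measurements.

```python
from typing import Dict


def best_origin(viewers_by_region: Dict[str, int],
                rtt_ms: Dict[str, Dict[str, float]]) -> str:
    """Pick the candidate metro with the lowest viewer-weighted mean RTT."""
    total = sum(viewers_by_region.values())

    def weighted_rtt(metro: str) -> float:
        return sum(n * rtt_ms[metro][region]
                   for region, n in viewers_by_region.items()) / total

    return min(rtt_ms, key=weighted_rtt)


if __name__ == "__main__":
    # Hypothetical audience split and RTTs (ms) from each candidate metro.
    viewers = {"eu": 60_000, "us": 30_000, "asia": 10_000}
    rtt = {
        "eu-metro":   {"eu": 15,  "us": 90,  "asia": 180},
        "us-metro":   {"eu": 100, "us": 25,  "asia": 200},
        "asia-metro": {"eu": 170, "us": 210, "asia": 20},
    }
    print(best_origin(viewers, rtt))  # eu-metro
```

With this sample data the European metro wins with a weighted RTT of 54 ms, against 87.5 ms and 167 ms for the others. A secondary origin would be justified wherever a large cohort still sees a high RTT after the first pick.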

Melbicom’s model, with 21 origin locations and a 55+ PoP CDN, is designed for that split: origins where compute belongs, distribution where distance matters.

Keeping Streams Online When Things Break

No single point of failure—that’s the operational mantra for live. The core patterns:

- Multi‑origin, multi‑region. Two or more independent origin clusters pull the same live feed and package segments concurrently. A CDN config lists both; on health‑check failure, fetches shift automatically.

- Smart routing. DNS steering or BGP anycast (where appropriate) places viewers at the nearest healthy entry point; a failed site simply stops announcing and traffic drains to the next‑closest.

- Encoder redundancy. Dual ingest pipelines (primary/backup) keep the feed flowing even if one encoder or network path fails.

- Rapid repair and human cover. When a host does fail, 4‑hour component replacement and 24/7 on‑call engineers mean the cluster’s redundancy window is short—and viewers never notice. Melbicom documents both practices for streaming workloads.
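The health-checked failover in the first pattern can be sketched in a few lines. This is an illustrative model only: `HealthTracker`, `pick_origin`, the hostnames, and the three-strike threshold are all assumptions, and a production CDN or load balancer would provide its own equivalent.

```python
from typing import Callable, Dict, Optional, Sequence


class HealthTracker:
    """Marks an origin unhealthy after N consecutive failed probes (avoids flapping)."""

    def __init__(self, fail_threshold: int = 3) -> None:
        self.fail_threshold = fail_threshold
        self._fails: Dict[str, int] = {}

    def record(self, origin: str, ok: bool) -> None:
        # A single success resets the counter; failures accumulate.
        self._fails[origin] = 0 if ok else self._fails.get(origin, 0) + 1

    def is_healthy(self, origin: str) -> bool:
        return self._fails.get(origin, 0) < self.fail_threshold


def pick_origin(origins: Sequence[str],
                is_healthy: Callable[[str], bool]) -> Optional[str]:
    """Return the first origin, in priority order, that passes its health check."""
    for origin in origins:
        if is_healthy(origin):
            return origin
    return None  # every origin is down: the caller should alert


if __name__ == "__main__":
    tracker = HealthTracker()
    origins = ["origin-a.example.net", "origin-b.example.net"]  # hypothetical hosts
    for _ in range(3):
        tracker.record("origin-a.example.net", ok=False)
    print(pick_origin(origins, tracker.is_healthy))  # origin-b.example.net
```

Requiring several consecutive failures before shifting traffic is a design choice: it trades a few seconds of detection time for protection against a single dropped probe triggering an unnecessary failover.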

Testing matters: schedule controlled “chaos” during low‑stakes windows—kill an origin, yank a transit, drop an encoder—until failover is boring. That’s the only path to confidence on show day.

Low Latency and Stability During Live Events

A pragmatic capacity rubric for live events:

- Work backward from concurrency × bitrate. Example: a marquee match with 150,000 viewers at 1080p (~5 Mbps) implies ~750 Gbps sustained across your edge, plus headroom for ABR steps and spikes.

- Provision origin ports for miss traffic and first‑segment storms. LL‑HLS/LL‑DASH means many tiny requests; NIC interrupt coalescing, TCP autotuning, and modern congestion control (such as BBR) help keep throughput stable through microbursts.

- Keep buffers honest. Target 2–5 second live latency end‑to‑end; avoid masking slow servers with oversized player buffers, which trades perceived “stability” for unacceptable delay.

- Monitor what viewers feel, not just what servers see. Track startup time, rebuffers per hour, edge cache‑hit ratio, and per‑region RTT to the player. Set alerts on deviation, not just thresholds.
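Alerting on deviation rather than a fixed threshold, as the last item suggests, can be as simple as comparing the current value with a recent baseline. The sketch below uses a plain z-score; the three-sigma cutoff and the sample startup times are illustrative assumptions, and real pipelines often prefer more robust statistics.

```python
from statistics import mean, stdev
from typing import Sequence


def deviates(baseline: Sequence[float], current: float,
             max_sigma: float = 3.0) -> bool:
    """True if `current` is more than `max_sigma` standard deviations from the baseline mean."""
    if len(baseline) < 2:
        return False                      # not enough history to estimate spread
    mu, sigma = mean(baseline), stdev(baseline)
    if sigma == 0:
        return current != mu
    return abs(current - mu) / sigma > max_sigma


if __name__ == "__main__":
    startup_seconds = [1.10, 1.20, 1.00, 1.15, 1.05]  # hypothetical per-minute medians
    print(deviates(startup_seconds, 1.20))            # False: within normal variation
    print(deviates(startup_seconds, 1.90))            # True: far outside the baseline
```

In this example a 1.9-second startup time would not trip a fixed 2-second threshold, yet it is roughly ten standard deviations above the baseline, which is exactly the kind of regression a deviation alert catches early.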

A note on payload planning: at ~7 GB/hour per 4K viewer, turnover can be staggering on cross‑region peak nights. Build object storage and inter‑PoP replication policies that keep hot ladders close to viewers and cold renditions out of the way.
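The rubric and the payload note reduce to a few multiplications. The helpers below are a planning sketch, not a sizing tool: the function names are hypothetical, the 25% headroom and 98% cache-hit ratio are example inputs, the hit ratio is assumed to be measured in bytes, and units are decimal.

```python
def edge_gbps(viewers: int, bitrate_mbps: float, headroom: float = 0.25) -> float:
    """Edge bandwidth to provision: concurrency x bitrate, plus fractional headroom."""
    return viewers * bitrate_mbps * (1 + headroom) / 1000


def origin_gbps(edge_demand_gbps: float, cache_hit_ratio: float) -> float:
    """Traffic that misses the edge cache and therefore reaches origin."""
    return edge_demand_gbps * (1 - cache_hit_ratio)


def delivered_terabytes(viewers: int, gb_per_viewer_hour: float, hours: float) -> float:
    """Total data delivered over the event, in decimal TB."""
    return viewers * gb_per_viewer_hour * hours / 1000


if __name__ == "__main__":
    print(edge_gbps(150_000, 5))                    # 937.5 Gbps with 25% headroom
    print(round(origin_gbps(750, 0.98), 1))         # 15.0 Gbps of miss traffic
    print(delivered_terabytes(50_000, 7, 3))        # 1050.0 TB, about 1 PB
```

The 750 Gbps example from the rubric becomes roughly 940 Gbps once headroom is included, while the origin only needs to carry the small fraction that misses the edge.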

Choosing the Best Video Streaming Server Configuration

- CPU vs. GPU for encode/transcode. Software x264/x265 on high‑clock CPUs is flexible, but real‑time multi‑ladder pipelines often benefit from GPU offload (NVENC/AMF) to cap per‑channel latency.

- NICs and queues. Favor 10/25/40/100 GbE with sufficient RX/TX queues and RSS; pin interrupts and leave CPU headroom for packaging.

- NVMe everywhere. Manifests, parts, and recent segments should live on low‑latency NVMe to avoid I/O becoming your bottleneck.

- Network placement beats pure horsepower. A “big” server in a far‑away DC is worse for latency than a “right‑sized” box near the viewers behind a good CDN.

Melbicom supports these patterns with per‑server bandwidth up to 200 Gbps, 21 origin metros, and a CDN with 55+ PoPs—so teams can pair the best server for streaming workloads with the right geography and delivery fabric.

Key Takeaways You Can Act On

- Place servers near demand. Stand up origins in the same continent as your biggest live cohorts; let the CDN handle the fan‑out.

- Engineer for the peak, not the average. Provision ports and hosts to absorb first‑minute storms; keep 20–30% headroom during events.

- Hit the latency budget. Design for 2–5 second live latency with LL‑HLS/LL‑DASH; verify via synthetic beacons from viewer ISPs.

- Remove single points of failure. Multi‑origin, multi‑region; automatic health‑checked failover; periodic game‑day drills.

- Watch what viewers feel. Startup delay and rebuffer minutes drive churn; know per‑region QoE every minute.

- Use infrastructure that scales with you. Melbicom offers 1,300+ configurations, 21 locations, up to 200 Gbps per server, 55+ CDN PoPs, and 24/7 support—the raw materials to keep latency tight and streams steady as you grow.

Powering a Seamless Live Experience?

Focus relentlessly on distance and determinism. Put deterministic performance (dedicated servers) where your encode, package, and origin cache must never stall; then erase distance with a CDN and edge placement aligned to your audience map. Use low‑latency ladders only when your end‑to‑end chain (from NVMe to NIC to route to edge) is tuned for microbursts. Finally, accept that failures will happen and practice the failovers until they are invisible to the viewer. Do that, and you’ll meet the modern bar for live streaming: low latency, stable startup, and no buffering during the moments that matter.

Melbicom’s fit for live streaming

Build a low‑latency stack on dedicated origins and a global CDN. Our team can help size ports, place servers in the right metros, and get you online fast.